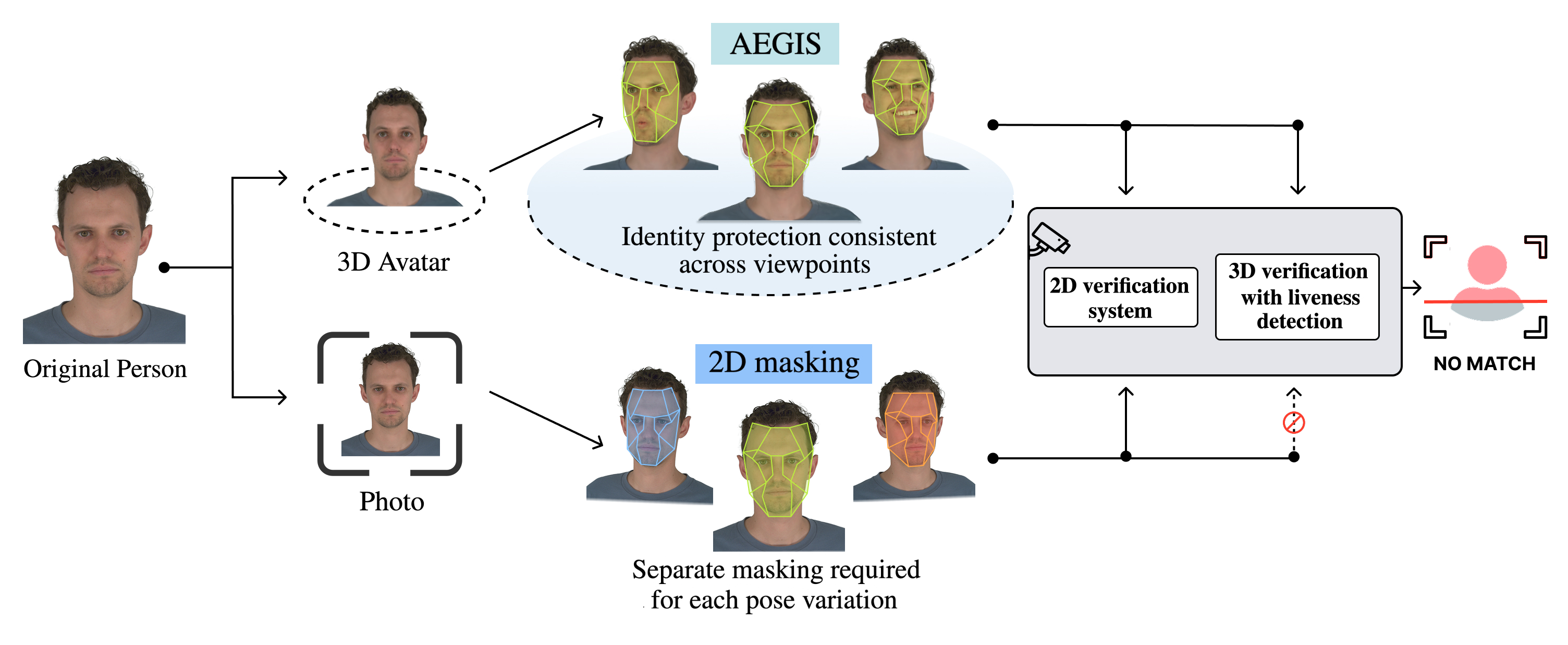

The growing adoption of photorealistic 3D facial avatars, particularly those built on efficient 3D Gaussian Splatting representations, introduces new risks of online identity theft, especially in systems that rely on biometric authentication. While effective adversarial masking methods have been developed for 2D images, a significant gap remains in achieving robust, viewpoint-consistent identity protection for dynamic 3D avatars. To address this, we present AEGIS, the first privacy-preserving identity masking framework for 3D Gaussian Avatars that preserves the subject's perceived characteristics. Our method conceals identity-related facial features while preserving the avatar’s perceptual realism and functional integrity. AEGIS applies adversarial perturbations to the Gaussian color coefficients, guided by a pre-trained face verification network, ensuring consistent protection across multiple viewpoints without retraining or modifying the avatar’s geometry. AEGIS achieves complete de-identification, reducing face retrieval and verification accuracy to 0%, while maintaining high perceptual quality (SSIM = 0.9555, PSNR = 35.52 dB). It also preserves key facial attributes such as age, race, gender, and emotion, demonstrating strong privacy protection with minimal visual distortion.

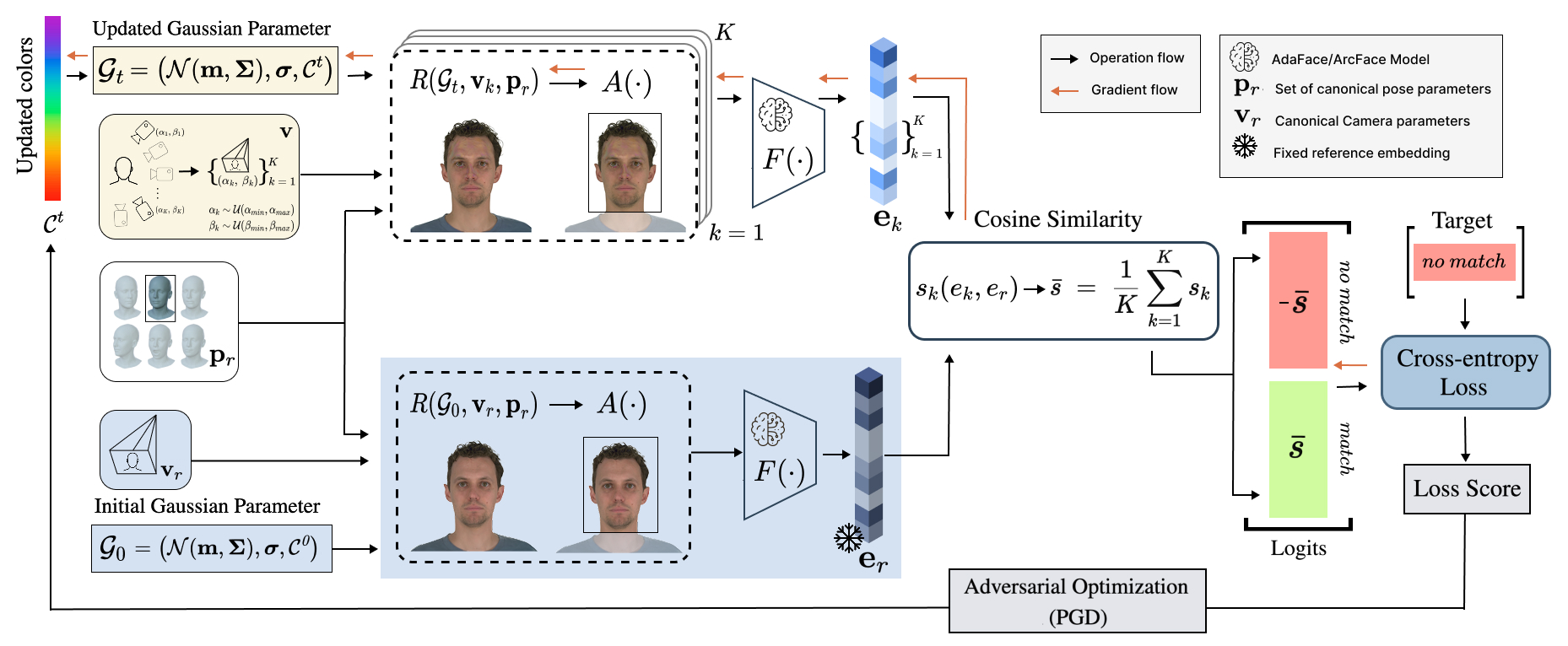

The process adversarially optimizes the color parameters \( \mathcal{C}^t \) of a 3D Gaussian avatar \( \mathcal{G} \) to evade recognition by the face recognition model \( F(\cdot) \). A fixed reference embedding \( \mathbf{e}_r \) is first obtained by rendering the original avatar \( \mathcal{G}_0 \) under canonical camera parameters \( \mathbf{v}_r \) and pose parameters \( \mathbf{p}_r \), and passing the result through \( F(\cdot) \). During each PGD optimization step, a set of camera parameters \( \{ \mathbf{v}_k \}_{k=1}^K \) is sampled to capture diverse viewpoints. The updated avatar \( \mathcal{G}_t \) is rendered from these viewpoints using the rendering function \( R(\cdot) \) and alignment module \( A(\cdot) \), producing a batch of images. Identity embeddings \( \{\mathbf{e}_k\} \) are then extracted using \( F(\cdot) \), and their average cosine similarity \( \bar{s} \) with the reference embedding \( \mathbf{e}_r \) is computed. This similarity defines the logits (\( \bar{s} \) for "match" and \( -\bar{s} \) for "no match"), from which a cross-entropy loss targeting the "no match" class is computed. The resulting loss is backpropagated through the network, and a PGD step updates the color parameters \( \mathcal{C}^t \), yielding an adversarial 3D representation.
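The loss and update described above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names (`cosine_similarity`, `no_match_loss`, `pgd_step`) are hypothetical, embeddings and colors are plain lists of floats, and the sign-gradient step with an \( L_\infty \) projection of radius \( \epsilon \) is the common PGD formulation, which the text does not explicitly confirm. Rendering, alignment, and the gradient computation itself are left out; `grad` stands in for the gradient of the loss with respect to the color parameters.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two embedding vectors given as lists of floats."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def no_match_loss(view_embeddings, ref_embedding):
    """Return (s_bar, loss) for a batch of per-view embeddings.

    s_bar is the average cosine similarity with the reference embedding.
    The logits are [s_bar, -s_bar] for ["match", "no match"], and the loss is
    the cross-entropy with "no match" as the target class:
        -log(exp(-s_bar) / (exp(s_bar) + exp(-s_bar))) = log(1 + exp(2 * s_bar))
    """
    sims = [cosine_similarity(e, ref_embedding) for e in view_embeddings]
    s_bar = sum(sims) / len(sims)
    loss = math.log1p(math.exp(2.0 * s_bar))
    return s_bar, loss


def pgd_step(colors, grad, original_colors, step_size, epsilon):
    """One PGD update on the color parameters (assumed L-infinity variant).

    Takes a signed gradient descent step on the loss, then projects each
    parameter back into [c0 - epsilon, c0 + epsilon] around its original value.
    """
    updated = []
    for c, g, c0 in zip(colors, grad, original_colors):
        sign = (g > 0) - (g < 0)
        c_new = c - step_size * sign
        c_new = min(max(c_new, c0 - epsilon), c0 + epsilon)
        updated.append(c_new)
    return updated
```

Minimizing this loss pushes \( \bar{s} \) downward, so the rendered views drift away from the reference identity, while the projection keeps every color coefficient within \( \epsilon \) of its original value.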

Verification results obtained with the AdaFace system for the avatar protected by AEGIS with ϵ = 0.1, shown across various rotation angles and pose variations.